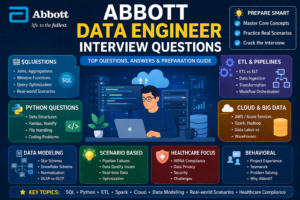

Abbott Data Engineer Interview Questions (Detailed Guide)

Preparing for a Data Engineer role at Abbott requires a strong understanding of data pipelines, cloud technologies, ETL processes, and healthcare data compliance. As a global healthcare company, Abbott focuses heavily on data integrity, scalability, and regulatory compliance, which makes its interviews somewhat more domain-focused than those at typical tech companies.

1. Overview of Abbott Data Engineer Role

A Data Engineer at Abbott is expected to:

- Build and maintain scalable data pipelines

- Handle structured and unstructured healthcare data

- Ensure data quality and compliance (HIPAA, GDPR)

- Work with cloud platforms like AWS/Azure

- Collaborate with data scientists and analysts

2. Technical Interview Questions

A. SQL and Database Questions

1. Write a query to find duplicate records in a table.

Answer:

    SELECT column_name, COUNT(*)
    FROM table_name
    GROUP BY column_name
    HAVING COUNT(*) > 1;

Follow-up Questions:

- How do you delete duplicates?

- Difference between ROW_NUMBER() and RANK()
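
One common way to answer the first follow-up is to number the rows inside each duplicate group and delete everything except the first. The sketch below runs that pattern against an in-memory SQLite database; the `patients` table, its columns, and the sample rows are invented for illustration, and the exact syntax may differ in other databases. It assumes an SQLite build with window-function support (3.25 or later).

```python
import sqlite3

# Hypothetical table and data, used only for illustration.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE patients (id INTEGER PRIMARY KEY, email TEXT)")
conn.executemany(
    "INSERT INTO patients (email) VALUES (?)",
    [("a@example.com",), ("b@example.com",), ("a@example.com",), ("a@example.com",)],
)

# Number the rows within each email group; keep row 1, delete the rest.
conn.execute(
    """
    DELETE FROM patients
    WHERE id IN (
        SELECT id FROM (
            SELECT id,
                   ROW_NUMBER() OVER (PARTITION BY email ORDER BY id) AS rn
            FROM patients
        )
        WHERE rn > 1
    )
    """
)

remaining = conn.execute("SELECT id, email FROM patients ORDER BY id").fetchall()
print(remaining)  # [(1, 'a@example.com'), (2, 'b@example.com')]
```

Ordering by `id` inside the window keeps the earliest row of each group; a different `ORDER BY` (for example, a last-updated timestamp) would keep a different survivor.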

2. What is indexing and how does it improve performance?

Answer:

Indexing improves query performance by reducing the amount of data scanned. An index is a separate data structure (such as a B-tree) that lets the database locate matching rows without reading the whole table.

Key Points:

- Clustered vs Non-clustered index

- Trade-off: Faster reads, slower writes
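
To make the read-side benefit concrete, the following sketch asks SQLite for its query plan before and after creating an index. The `orders` table and the index name are hypothetical, and the plan text varies between SQLite versions, so the example only checks whether the index is mentioned.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER, amount REAL)"
)
conn.executemany(
    "INSERT INTO orders (customer_id, amount) VALUES (?, ?)",
    [(i % 100, float(i)) for i in range(1000)],
)

query = "SELECT * FROM orders WHERE customer_id = 42"

def plan(sql):
    # The last column of each EXPLAIN QUERY PLAN row is a readable description.
    return " | ".join(row[-1] for row in conn.execute("EXPLAIN QUERY PLAN " + sql))

plan_before = plan(query)  # a full table scan
conn.execute("CREATE INDEX idx_orders_customer ON orders (customer_id)")
plan_after = plan(query)   # a search that uses idx_orders_customer

print(plan_before)
print(plan_after)
```

The write-side cost is not shown here: every insert or update on `orders` now also has to maintain the index, which is the trade-off listed above.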

3. Explain normalization vs denormalization.

| Feature | Normalization | Denormalization |
|---|---|---|
| Purpose | Reduce redundancy | Improve read performance |
| Use Case | OLTP systems | OLAP systems |

4. What are window functions?

Examples:

    SELECT name, salary,
           RANK() OVER (ORDER BY salary DESC) AS salary_rank  -- avoid RANK as an alias; it is reserved in some databases
    FROM employees;
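
The difference between the ranking functions only shows up when there are ties. Here is a small sketch using SQLite from Python, with an invented three-row `employees` table in which two people share the top salary.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE employees (name TEXT, salary INTEGER)")
conn.executemany(
    "INSERT INTO employees VALUES (?, ?)",
    [("Ana", 900), ("Ben", 900), ("Cy", 700)],  # sample data, two tied salaries
)

rows = conn.execute(
    """
    SELECT name, salary,
           ROW_NUMBER() OVER (ORDER BY salary DESC, name) AS row_num,
           RANK()       OVER (ORDER BY salary DESC)       AS salary_rank,
           DENSE_RANK() OVER (ORDER BY salary DESC)       AS dense_salary_rank
    FROM employees
    ORDER BY salary DESC, name
    """
).fetchall()

for row in rows:
    print(row)
# ('Ana', 900, 1, 1, 1)
# ('Ben', 900, 2, 1, 1)
# ('Cy', 700, 3, 3, 2)
```

`ROW_NUMBER()` always produces unique numbers, `RANK()` gives ties the same value and then skips, and `DENSE_RANK()` gives ties the same value without skipping.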

B. Python & Programming

5. How do you handle large datasets in Python?

Answer:

- Use generators

- Use libraries like Pandas with chunks

- Dask or PySpark for distributed processing
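
As a rough illustration of the generator approach, the sketch below streams CSV rows one at a time and aggregates them without building a full list. The column names and the in-memory sample are assumptions; with a real file you would pass the open file handle instead of the `StringIO` object.

```python
import csv
import io
from typing import Iterable, Iterator

def read_rows(lines: Iterable[str]) -> Iterator[dict]:
    """Yield one parsed CSV row at a time instead of loading the whole file."""
    yield from csv.DictReader(lines)

def total_amount(rows: Iterable[dict]) -> float:
    # Consumes the generator lazily; only one row is held in memory at a time.
    return sum(float(row["amount"]) for row in rows)

# In-memory stand-in for a large file (hypothetical columns).
sample = io.StringIO("id,amount\n1,10.5\n2,4.5\n3,5.0\n")
result = total_amount(read_rows(sample))
print(result)  # 20.0
```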

6. Difference between list and tuple

| Feature | List | Tuple |
|---|---|---|
| Mutable | Yes | No |
| Performance | Slightly slower, more memory | Slightly faster, less memory |

7. Write a Python script to read a large CSV file efficiently.

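    import pandas as pd

    def process(chunk):
        # Placeholder: replace with the real per-chunk logic.
        print(len(chunk))

    # Read the file in 10,000-row chunks so it never has to fit in memory at once.
    for chunk in pd.read_csv("file.csv", chunksize=10000):
        process(chunk)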

C. Data Structures & Algorithms

8. What is a hash table?

- Stores key-value pairs

- Provides O(1) lookup on average (O(n) in the worst case, when many keys collide)

9. Explain time complexity.

Examples:

- O(1) – constant

- O(n) – linear

- O(log n) – logarithmic (for example, binary search)
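
A short sketch can make these classes tangible. It compares a linear scan, a binary search built on the standard `bisect` module, and an average-case O(1) set lookup over the same data; the function names and the sample list are chosen for this example only.

```python
from bisect import bisect_left

def linear_search(items, target):
    """O(n): checks items one by one."""
    for i, value in enumerate(items):
        if value == target:
            return i
    return -1

def binary_search(sorted_items, target):
    """O(log n): halves the search range each step; input must be sorted."""
    i = bisect_left(sorted_items, target)
    if i < len(sorted_items) and sorted_items[i] == target:
        return i
    return -1

data = list(range(0, 1_000_000, 2))  # sorted even numbers
lookup = set(data)                   # hash-based, O(1) average membership test

print(linear_search(data, 10), binary_search(data, 10), 10 in lookup)
```

For a target near the end of `data`, the linear scan touches roughly half a million elements, while the binary search needs about 19 comparisons.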

D. ETL & Data Pipeline Questions

10. What is ETL?

- Extract → Transform → Load

11. Difference between ETL and ELT

| ETL | ELT |
|---|---|
| Transform before load | Transform after load |
| Traditional systems | Cloud systems |

12. How do you design a data pipeline?

Steps:

- Data ingestion (APIs, DBs)

- Data transformation

- Data storage (warehouse/lake)

- Data validation

- Monitoring
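
The steps above can be sketched as a tiny end-to-end pipeline. Everything here is a stand-in: `ingest` returns hard-coded sample records instead of calling an API, SQLite plays the role of the warehouse, and monitoring is reduced to a log line. It is meant to show how the stages connect, not how a production pipeline is built.

```python
import logging
import sqlite3

logging.basicConfig(level=logging.INFO)
log = logging.getLogger("pipeline")

def ingest():
    # Stand-in for an API or database read; values are invented sample data.
    return [
        {"device_id": "d1", "reading": "5.4"},
        {"device_id": "d2", "reading": "bad"},
        {"device_id": "d3", "reading": "6.1"},
    ]

def transform(records):
    """Cast readings to float; route unparseable records to a reject list."""
    clean, rejected = [], []
    for rec in records:
        try:
            clean.append((rec["device_id"], float(rec["reading"])))
        except ValueError:
            rejected.append(rec)
    return clean, rejected

def store(conn, rows):
    conn.execute("CREATE TABLE IF NOT EXISTS readings (device_id TEXT, reading REAL)")
    conn.executemany("INSERT INTO readings VALUES (?, ?)", rows)
    conn.commit()

def validate(conn, expected_rows):
    actual = conn.execute("SELECT COUNT(*) FROM readings").fetchone()[0]
    if actual != expected_rows:
        raise RuntimeError(f"row count mismatch: {actual} != {expected_rows}")
    return actual

def run_pipeline(conn):
    raw = ingest()
    clean, rejected = transform(raw)
    store(conn, clean)
    loaded = validate(conn, len(clean))
    # Monitoring: emit counts that a dashboard or alert rule could consume.
    log.info("ingested=%d loaded=%d rejected=%d", len(raw), loaded, len(rejected))
    return loaded, len(rejected)

summary = run_pipeline(sqlite3.connect(":memory:"))
```

In an interview, it helps to say where each stage would live in a real stack (for example, an orchestrator scheduling the run and a warehouse replacing SQLite) and what happens to the rejected records.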

13. How do you ensure data quality?

- Validation rules

- Schema checks

- Duplicate removal

- Logging & alerts
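
A minimal sketch of the first three checks, written in plain Python. The schema, the age bounds, and the `patient_id` key are assumptions made for the example; real pipelines often delegate this to a validation framework.

```python
EXPECTED_SCHEMA = {"patient_id": str, "age": int}  # hypothetical schema

def check_schema(record):
    """Schema check: same keys as expected, each value of the expected type."""
    return record.keys() == EXPECTED_SCHEMA.keys() and all(
        isinstance(record[k], t) for k, t in EXPECTED_SCHEMA.items()
    )

def check_rules(record):
    """Validation rule: age must fall in a plausible range (assumed bounds)."""
    return 0 <= record["age"] <= 120

def clean(records):
    seen, good, bad = set(), [], []
    for rec in records:
        if not check_schema(rec) or not check_rules(rec):
            bad.append(rec)      # would be logged or alerted on in practice
        elif rec["patient_id"] in seen:
            continue             # duplicate removal by key
        else:
            seen.add(rec["patient_id"])
            good.append(rec)
    return good, bad
```

Calling `clean` on a batch returns the accepted records and the rejected ones separately, so the rejected list can feed the logging and alerting step.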

E. Big Data Technologies

14. What is Apache Spark?

- Distributed data processing engine

- Often faster than Hadoop MapReduce because it keeps intermediate data in memory

15. Difference between Spark and Hadoop

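| Spark | Hadoop MapReduce |
|---|---|
| Processes data in memory where possible | Writes intermediate results to disk |
| Usually faster for iterative workloads | Usually slower because of disk I/O |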

16. What is partitioning?

- Divides data for parallel processing

- Improves performance

F. Cloud & Tools

17. What cloud services have you used?

Common answers:

- AWS (S3, Redshift, Glue)

- Azure (Data Factory, Synapse)

18. What is data lake vs data warehouse?

| Data Lake | Data Warehouse |
|---|---|
| Raw data | Structured data |
| Cheap storage | Optimized queries |

19. What is Airflow?

- Workflow orchestration tool

- Used for scheduling pipelines

G. Data Modeling

20. What is star schema?

- Fact table + dimension tables
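
To show what that looks like in practice, here is a small sketch built in SQLite: one fact table with two dimension tables, followed by a typical aggregate query. All table names, columns, and rows are invented for illustration.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    -- Dimension tables hold descriptive attributes.
    CREATE TABLE dim_product (product_id INTEGER PRIMARY KEY, name TEXT);
    CREATE TABLE dim_date    (date_id INTEGER PRIMARY KEY, year INTEGER);
    -- The fact table holds measures plus foreign keys to each dimension.
    CREATE TABLE fact_sales (
        product_id INTEGER REFERENCES dim_product(product_id),
        date_id    INTEGER REFERENCES dim_date(date_id),
        units      INTEGER
    );
    INSERT INTO dim_product VALUES (1, 'Sensor'), (2, 'Reader');
    INSERT INTO dim_date    VALUES (10, 2023), (11, 2024);
    INSERT INTO fact_sales  VALUES (1, 10, 5), (1, 11, 7), (2, 11, 3);
    """
)

units_by_product_year = conn.execute(
    """
    SELECT p.name, d.year, SUM(f.units)
    FROM fact_sales f
    JOIN dim_product p ON p.product_id = f.product_id
    JOIN dim_date d    ON d.date_id = f.date_id
    GROUP BY p.name, d.year
    ORDER BY p.name, d.year
    """
).fetchall()

print(units_by_product_year)
# [('Reader', 2024, 3), ('Sensor', 2023, 5), ('Sensor', 2024, 7)]
```

Every analytical query follows the same shape: start from the fact table, join out to the dimensions you want to slice by, then aggregate the measures.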

21. Difference between OLTP and OLAP

| OLTP | OLAP |
|---|---|
| Transactional | Analytical |
| Fast writes | Complex queries |

3. Scenario-Based Questions

22. How would you handle a failing data pipeline?

Answer:

- Check logs

- Identify failure point

- Retry mechanism

- Alert system
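
The retry step is the easiest of these to show in code. Below is one possible sketch of a retry wrapper with exponential backoff; the function names, the delay values, and the simulated `flaky_load` step are all hypothetical. Orchestrators such as Airflow offer built-in retry settings, so a hand-written wrapper like this is mainly useful for explaining the idea.

```python
import logging
import time

log = logging.getLogger("pipeline")

def run_with_retry(task, max_attempts=3, base_delay=0.01, sleep=time.sleep):
    """Run task(); on failure, log, wait with exponential backoff, and retry."""
    for attempt in range(1, max_attempts + 1):
        try:
            return task()
        except Exception as exc:
            log.warning("attempt %d/%d failed: %s", attempt, max_attempts, exc)
            if attempt == max_attempts:
                # Out of retries: surface the error so an alerting hook can fire.
                raise
            sleep(base_delay * 2 ** (attempt - 1))

# Hypothetical flaky step that fails twice, then succeeds.
calls = {"n": 0}

def flaky_load():
    calls["n"] += 1
    if calls["n"] < 3:
        raise ConnectionError("warehouse unavailable")
    return "loaded"

result = run_with_retry(flaky_load)
print(result, calls["n"])  # loaded 3
```

Retries only help with transient failures, and they are only safe when the step is idempotent, so it is worth mentioning both points when you give this answer.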

23. How do you optimize slow queries?

- Use indexes

- Avoid SELECT *

- Partition tables

- Optimize joins

24. How would you design a real-time data pipeline?

Answer:

- Kafka for streaming

- Spark Streaming / Flink

- Store in NoSQL or warehouse

25. How do you handle missing data?

- Fill with defaults

- Remove records

- Use interpolation
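
Each of the three strategies fits in a few lines of plain Python, as sketched below with `None` marking a missing value. The helper names are made up for this example; in pandas the corresponding methods are `fillna`, `dropna`, and `interpolate`.

```python
def fill_default(values, default=0.0):
    """Replace each missing value with a fixed default."""
    return [default if v is None else v for v in values]

def drop_missing(values):
    """Remove missing values entirely."""
    return [v for v in values if v is not None]

def interpolate(values):
    """Linearly interpolate interior gaps; leading/trailing gaps stay None."""
    out = list(values)
    known = [i for i, v in enumerate(out) if v is not None]
    for left, right in zip(known, known[1:]):
        step = (out[right] - out[left]) / (right - left)
        for i in range(left + 1, right):
            out[i] = out[left] + step * (i - left)
    return out

readings = [1.0, None, 3.0, None, None, 6.0]
print(fill_default(readings))  # [1.0, 0.0, 3.0, 0.0, 0.0, 6.0]
print(drop_missing(readings))  # [1.0, 3.0, 6.0]
print(interpolate(readings))   # [1.0, 2.0, 3.0, 4.0, 5.0, 6.0]
```

Which strategy is appropriate depends on the data: interpolation suits ordered numeric series, while defaults or removal may be safer for categorical or clinically sensitive fields.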

4. Healthcare-Specific Questions (Important for Abbott)

26. What is HIPAA compliance?

- Protects patient data privacy

- Requires secure data handling

27. How do you secure sensitive data?

- Encryption

- Access control

- Masking
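
Masking is the easiest of these to demonstrate briefly. The sketch below shows two related techniques: pseudonymizing an identifier with a keyed hash (so joins still work on the token) and masking an email for display. The key, the function names, and the masking rule are illustrative assumptions; whether either approach satisfies a specific regulation is a question for a compliance team, not for this code.

```python
import hashlib
import hmac

# Placeholder only; in practice the key would come from a secrets manager.
SECRET_KEY = b"replace-with-a-managed-secret"

def pseudonymize(patient_id: str) -> str:
    """Keyed hash: the same input always maps to the same token."""
    return hmac.new(SECRET_KEY, patient_id.encode(), hashlib.sha256).hexdigest()

def mask_email(email: str) -> str:
    """Keep the first character and the domain; hide the rest of the local part."""
    local, _, domain = email.partition("@")
    return f"{local[:1]}{'*' * (len(local) - 1)}@{domain}"

print(mask_email("jane@example.com"))  # j***@example.com
print(pseudonymize("patient-001")[:12])
```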

28. What challenges exist in healthcare data?

- Data inconsistency

- Privacy issues

- Large volume

5. Behavioral Questions

29. Tell me about a challenging project.

Structure:

- Situation

- Task

- Action

- Result

30. How do you handle tight deadlines?

- Prioritize tasks

- Break into steps

- Communicate early

31. Describe a time you worked with a team.

Focus on:

- Collaboration

- Conflict resolution

32. Why Abbott?

Sample Answer:

- Global healthcare impact

- Data-driven innovation

- Career growth

6. Coding/Practical Tasks

33. Reverse a string

    def reverse_string(s):
        return s[::-1]

34. Find second largest number

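    def second_largest(arr):
        unique = sorted(set(arr))
        # Return None when there are fewer than two distinct values.
        return unique[-2] if len(unique) >= 2 else None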

35. Detect duplicates in list

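    from collections import Counter

    def find_duplicates(arr):
        # Count each item once (O(n)) instead of calling arr.count() per element (O(n^2)).
        return [x for x, n in Counter(arr).items() if n > 1]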

7. Advanced Questions

36. What is data partitioning vs sharding?

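- Partitioning → splitting a dataset into parts, often within a single system (for example, table partitions by date)

- Sharding → distributing those parts across separate servers or databases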
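
A simple way to illustrate sharding is hash-based routing, where a stable hash of the key decides which server owns a record. The shard names below are hypothetical, and modulo routing is only one option: it reshuffles most keys when the shard count changes, which is why consistent hashing is often used instead.

```python
import hashlib

SHARDS = ["db-0", "db-1", "db-2"]  # hypothetical shard names

def shard_for(key: str, shards=SHARDS) -> str:
    """Map a key to a shard using a stable hash.

    Python's built-in hash() is salted per process for strings, so a
    cryptographic digest is used to get the same answer on every run.
    """
    digest = hashlib.sha256(key.encode()).digest()
    return shards[int.from_bytes(digest[:8], "big") % len(shards)]

print(shard_for("patient-001"))
```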

37. What is schema evolution?

- Ability to change schema without breaking pipeline

38. What is CDC (Change Data Capture)?

- Tracks changes in database
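
Production CDC tools usually read the database's transaction log. As a simplified stand-in, the sketch below compares two snapshots keyed by primary key and emits insert, update, and delete events; the function name and event format are assumptions made for the example.

```python
def diff_snapshots(old: dict, new: dict) -> list:
    """Compare two {primary_key: row} snapshots and emit change events."""
    events = []
    for key in new.keys() - old.keys():
        events.append(("insert", key, new[key]))
    for key in old.keys() - new.keys():
        events.append(("delete", key, old[key]))
    for key in old.keys() & new.keys():
        if old[key] != new[key]:
            events.append(("update", key, new[key]))
    # Sort by operation and key so the output order is deterministic.
    return sorted(events, key=lambda e: (e[0], e[1]))

before = {1: {"name": "a"}, 2: {"name": "b"}}
after = {2: {"name": "B"}, 3: {"name": "c"}}
changes = diff_snapshots(before, after)
print(changes)
```

Snapshot comparison misses intermediate changes between snapshots and scales poorly on large tables, which is the main reason log-based CDC is preferred in practice.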

39. What is data lineage?

- Tracks data flow from source to destination

8. Tips to Crack Abbott Data Engineer Interview

1. Focus on SQL & ETL

- Most questions revolve around SQL optimization and pipelines

2. Understand Healthcare Domain

- Learn basics of HIPAA and healthcare data

3. Practice Real-Time Scenarios

- Pipeline failures

- Data quality issues

4. Learn Cloud Tools

- Azure Data Factory

- AWS Glue

9. Common Mistakes to Avoid

- Not explaining thought process

- Ignoring edge cases

- Weak SQL knowledge

- No understanding of data pipelines

10. Final Preparation Checklist

✔ Strong SQL queries

✔ Python basics

✔ ETL concepts

✔ Cloud fundamentals

✔ Data modeling

✔ Healthcare compliance basics

Frequently Asked Questions (FAQs) – Abbott Data Engineer Interview

1. What is the interview process for a Data Engineer at Abbott?

The typical interview process at Abbott includes multiple stages:

- Initial HR Screening: Basic discussion about experience, salary expectations, and availability

- Technical Round(s): Focus on SQL, Python, ETL pipelines, and data modeling

- Managerial Round: Evaluates problem-solving ability and project experience

- Final Round: Behavioral and culture fit

Some roles may also include a coding test or case study related to real-world data pipelines.

2. What technical skills are required for Abbott Data Engineer roles?

Key skills include:

- Strong SQL knowledge (joins, window functions, optimization)

- Python programming for data processing

- Experience with ETL tools (Airflow, Informatica, Azure Data Factory)

- Knowledge of Big Data tools like Apache Spark

- Familiarity with cloud platforms such as AWS or Azure

- Understanding of data modeling and warehousing concepts

3. Is coding important for Abbott Data Engineer interviews?

Yes, but the focus is more on practical coding rather than advanced algorithms.

You should be comfortable with:

- Writing clean Python scripts

- Handling large datasets

- Solving basic data structure problems

- SQL-based problem solving

4. What kind of SQL questions are asked?

SQL is one of the most important areas.

Expect questions like:

- Finding duplicates

- Writing complex joins

- Using window functions

- Query optimization scenarios

- Aggregation and grouping

Interviewers often test both accuracy and performance optimization.

5. Does Abbott ask system design questions?

Yes, especially for mid-level and senior roles.

Common topics:

- Designing data pipelines

- Building real-time streaming systems

- Data warehouse architecture

- Handling large-scale data processing

6. Are cloud technologies important for Abbott interviews?

Absolutely. Abbott increasingly uses cloud-based solutions.

Important services to know:

- AWS (S3, Glue, Redshift)

- Azure (Data Factory, Synapse Analytics)

- Cloud storage and compute concepts

7. What healthcare knowledge is required?

You don’t need deep medical knowledge, but understanding data compliance and privacy is crucial.

Key concepts:

- HIPAA compliance

- Data security practices

- Handling sensitive patient data

8. How can I prepare for ETL-related questions?

Focus on:

- Real-world ETL pipeline design

- Data transformation techniques

- Error handling and logging

- Data validation and quality checks

Try explaining pipelines you’ve worked on in detail.

9. What are common behavioral questions asked?

Some frequently asked behavioral questions:

- Tell me about a challenging data project

- Describe a time you fixed a broken pipeline

- How do you handle tight deadlines?

- How do you work with cross-functional teams?

Use the STAR method (Situation, Task, Action, Result) for best answers.

10. What level of Python is expected?

You don’t need to be an expert, but you should know:

- Data handling with Pandas

- Writing efficient scripts

- File processing

- Basic data structures

11. How difficult is the Abbott Data Engineer interview?

The difficulty level is generally moderate to high, depending on experience.

- Entry-level: Focus on basics (SQL, Python, ETL)

- Mid-level: Real-world scenarios and pipeline design

- Senior-level: System design, scalability, and architecture

12. What kind of projects should I highlight?

Highlight projects involving:

- Data pipelines (batch or streaming)

- Cloud-based data solutions

- Data warehouse implementations

- Automation and performance optimization

Make sure to explain your specific contribution clearly.

13. Do they ask about big data tools like Spark?

Yes, especially if your resume includes it.

Be ready to explain:

- How Spark works

- Difference between RDD, DataFrame, Dataset

- Performance optimization techniques

- Real-world use cases

14. How important is data modeling?

Very important.

You should understand:

- Star schema vs snowflake schema

- Fact and dimension tables

- Normalization vs denormalization

- Designing scalable data models

15. What mistakes should I avoid in the interview?

Common mistakes include:

- Weak SQL fundamentals

- Not explaining your approach clearly

- Ignoring edge cases

- Lack of real-world examples

- Poor understanding of data pipelines

16. How long should I prepare for Abbott Data Engineer interviews?

Preparation time depends on your experience:

- Beginners: 4–6 weeks

- Intermediate: 2–3 weeks

- Experienced professionals: 1–2 weeks (focused revision)

17. Is prior healthcare experience required?

No, but it’s a plus.

Even basic knowledge of:

- Healthcare data systems

- Compliance standards

- Data privacy

can give you an advantage.

18. Do they ask about real-time data processing?

Yes, especially for advanced roles.

You may be asked about:

- Kafka

- Streaming pipelines

- Real-time analytics

- Handling high-volume data

19. What tools should I learn before applying?

Recommended tools:

- SQL (PostgreSQL, MySQL)

- Python

- Apache Spark

- Airflow

- AWS or Azure

- Git

20. How can I stand out in the interview?

To stand out:

- Explain real-world scenarios clearly

- Show problem-solving thinking

- Demonstrate optimization techniques

- Highlight impact (performance improvements, cost savings)

- Communicate confidently

Conclusion

Preparing for an Abbott Data Engineer interview requires a mix of:

- Strong technical skills (SQL, Python, Spark)

- Real-world data pipeline experience

- Understanding of healthcare data regulations

- Problem-solving and communication skills

If you focus on hands-on practice and real-world scenarios, you’ll significantly increase your chances of success.